Autonomous robots collaborate to explore and map buildings

Advanced autonomous robots are being developed by a team from the Georgia Institute of Technology, the University of Pennsylvania and the California Institute of Technology/Jet Propulsion Laboratory (JPL). The program vision is for collaborative teams of tiny robots that could roll, hop, crawl or fly just about anywhere, carrying sensors that detect and send back information critical to human operators.

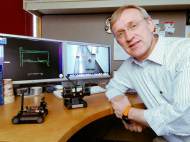

“When first responders – whether it’s a firefighter in downtown Atlanta or a soldier overseas – confront an unfamiliar structure, it’s very stressful and potentially dangerous because they have limited knowledge of what they’re dealing with”, said Henrik Christensen, a professor in the Georgia Tech College of Computing and director of the Robotics and Intelligent Machines Center there. “If those first responders could send in robots that would quickly search the structure and send back a map, they’d have a much better sense of what to expect and they’d feel more confident.”

The ability to map and explore simultaneously represents a milestone in the Micro Autonomous Systems and Technology (MAST) Collaborative Technology Alliance Program – a five-year program led by BAE Systems. The wheeled platforms used in the experiment measure about one square foot. But MAST researchers are working toward platforms small enough to be held in the palm of a hand.

The robots have navigation technology developed by Georgia Tech, with vision-based techniques from JPL and network technology from the University of Pennsylvania. In the experiment, the robots perform their mapping work by using a video camera and a laser scanner. Supported by on-board computing capability, the camera locates doorways and windows, while the scanner measures walls. In addition, an inertial measurement unit helps stabilize the robot and provides information about its movement.

Data from the sensors is integrated into a local area map that is developed by each robot using a graph-based technique called simultaneous localization and mapping (SLAM). The SLAM approach allows an autonomous vehicle to develop a map of either known or unknown environments, while also monitoring and reporting on its own current location. When GPS is active, human operators can use it to see where their robots are. But in the absence of global location information, SLAM enables the robots to keep track of their own locations as they move.
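To give a feel for the graph-based idea, here is a minimal sketch in Python. It is not the MAST robots' software: it assumes a robot moving along a single line, treats each pose as a graph node and each relative measurement as an edge, and finds the poses that best satisfy all edges by least squares. The names `solve_linear` and `solve_pose_graph` and the constraint format are made up for this illustration.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(M[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (M[r][n] - s) / M[r][r]
    return x


def solve_pose_graph(num_poses, constraints, anchor=0.0):
    """Estimate 1-D poses from relative measurements.

    constraints: list of (i, j, offset, weight), each meaning
    "pose j minus pose i was measured as offset" (hypothetical format).
    Pose 0 is pinned to `anchor` so the solution is unique.
    """
    n = num_poses
    H = [[0.0] * n for _ in range(n)]
    b = [0.0] * n
    # Accumulate the normal equations of sum(weight * (x_j - x_i - offset)^2).
    for i, j, offset, weight in constraints:
        H[i][i] += weight
        H[j][j] += weight
        H[i][j] -= weight
        H[j][i] -= weight
        b[i] -= weight * offset
        b[j] += weight * offset
    # Replace the first equation with x_0 = anchor.
    H[0] = [1.0] + [0.0] * (n - 1)
    b[0] = anchor
    return solve_linear(H, b)


# Two odometry steps of 1.0 m each, plus one direct measurement
# saying the last pose is 2.2 m from the start.
poses = solve_pose_graph(3, [(0, 1, 1.0, 1.0), (1, 2, 1.0, 1.0), (0, 2, 2.2, 1.0)])
print(poses)
```

The odometry alone would put the last pose at 2.0 m and the direct measurement at 2.2 m; the solver spreads the disagreement across all edges. A real system works with 2-D or 3-D poses, nonlinear measurements and far larger graphs.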

“There is no lead robot, yet each unit is capable of recruiting other units to make sure the entire area is explored”, said Christensen. “When the first robot comes to an intersection, it says to a second robot, ‘I’m going to go to the left if you go to the right.’”
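The leaderless hand-off Christensen describes can be pictured with a small Python sketch. It is only an illustration under simple assumptions, not the team's protocol: the robot that reaches an intersection keeps one branch for itself and recruits idle peers for the others. The function `assign_branches` and the robot and branch names are hypothetical.

```python
def assign_branches(discoverer, idle_peers, branches):
    """Split the branches of an intersection among robots.

    The discovering robot takes the first branch and recruits one idle
    peer per remaining branch. Returns (assignment, unassigned), where
    unassigned lists branches nobody was free to take.
    """
    if not branches:
        return {}, []
    assignment = {discoverer: branches[0]}
    for peer, branch in zip(idle_peers, branches[1:]):
        assignment[peer] = branch
    unassigned = branches[1 + len(idle_peers):]
    return assignment, unassigned


# "I'm going to go to the left if you go to the right."
plan, leftover = assign_branches("robot_1", ["robot_2"], ["left", "right"])
print(plan, leftover)
```

Because any robot can play the discoverer role, no unit has to act as leader; branches left unassigned would simply wait until a robot becomes free.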

Christensen expects the robots’ abilities to expand beyond mapping soon. One capability under development by a MAST team involves tiny radar units that could see through walls and detect objects behind them. Infrared sensors could also support the search mission by locating anything giving off heat. In addition, a MAST team is developing a highly flexible “whisker” to sense the proximity of walls, even in the dark.

The research team is designing a more complex experiment for the coming year that will include small autonomous aerial platforms for locating a particular building, finding likely entry points and then calling in robotic mapping teams.

In addition to the three universities and BAE Systems, other MAST team participants are North Carolina A&T State University, the University of California Berkeley, the University of Maryland, the University of Michigan, the University of New Mexico, Harvard University, the Massachusetts Institute of Technology, and Daedalus Flight Systems.
