US Navy develops robot Octavia for more natural human-robot interaction

The number of military robots is rising, whether they are scouting drones, bomb squad robots, search and rescue robots or assault robots. Most of these robots are remotely controlled, with little input from their autonomous systems. However, the US Navy Center for Applied Research in Artificial Intelligence (NCARAI) is working on more natural human-robot interaction, so that robots won’t demand as much attention as they currently do.

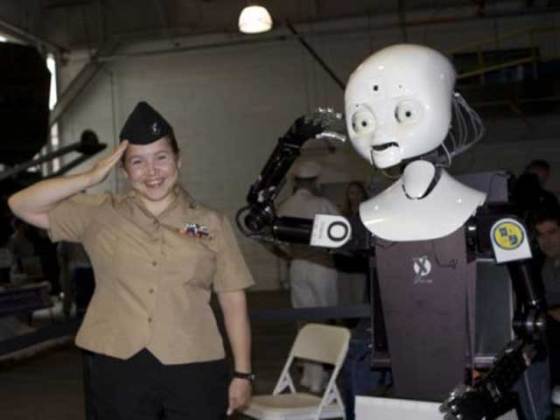

NCARAI’s latest attempt at an easy-to-relate-to robot, named Octavia, was recently presented to the public for the first time in New York City. If you are a regular reader and Octavia looks familiar, it’s because we have already written about the robot Octavia is based on: the MDS robot Nexi.

Octavia rolls around on a wheeled Segway-like base with added training wheels that help the robot keep its balance. Its artificial intelligence (AI) isn’t fully developed yet, but the developers are working on improving its natural language software so that it can fully converse with humans. It has both laser and infrared rangefinders, color CCDs in each eye, and four microphones in its head.

The robot has a fully articulated head, arms and hands. To make it seem more natural (and on some occasions a bit creepy), the robot’s face has a moving mouth, eyeballs, eyelids and eyebrows. Although those features might seem unnecessary, they help communication, because people can tell from the robot’s expression whether it understood an order.

Thanks to its Fast Linear Optical Appearance Tracker (FLOAT) system, Octavia can track the position and orientation of complex objects (such as faces) in real time, from up to 3 meters (10 feet) away. A Head Gesture Recognizer looks out for head nods and shakes, while the Set Theoretic Anatomical Tracker (STAT) tracks head, arm and hand positions. All this data is sent to a VLAD Recognizer, which tries to figure out what the person is doing or indicating with their gestures. The robot learns through NCARAI’s “modified cognitive architecture” called ACT-R/E, which is designed to mimic the way people learn.
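
To give a rough feel for what a head gesture recognizer has to decide, here is a minimal sketch in Python. It is not NCARAI’s code and says nothing about how Octavia actually works; the function name `classify_head_gesture`, the angle inputs and the 5-degree threshold are all assumptions made up for illustration. It assumes some tracker already supplies a short window of head pitch (up-down) and yaw (left-right) angles.

```python
from typing import Sequence


def classify_head_gesture(
    pitch_deg: Sequence[float],
    yaw_deg: Sequence[float],
    threshold_deg: float = 5.0,  # hypothetical cutoff, not a value from NCARAI
) -> str:
    """Label a short window of head angles as 'nod', 'shake' or 'none'.

    Illustrative only: a nod is taken to be mostly up-down (pitch) motion,
    a shake mostly left-right (yaw) motion.
    """

    def swing(angles: Sequence[float]) -> float:
        # Peak-to-peak range of the angle over the window.
        return max(angles) - min(angles) if angles else 0.0

    pitch_swing = swing(pitch_deg)
    yaw_swing = swing(yaw_deg)

    # Small movements on both axes are treated as no gesture at all.
    if max(pitch_swing, yaw_swing) < threshold_deg:
        return "none"

    # Otherwise the axis with the larger swing decides the label.
    return "nod" if pitch_swing > yaw_swing else "shake"


if __name__ == "__main__":
    print(classify_head_gesture([0, 8, -6, 7, 0], [0, 1, 0, -1, 0]))
    print(classify_head_gesture([0, 1, 0], [0, 10, -10]))
```

A real recognizer would also have to cope with noise, timing and partial movements, which this toy comparison of the two swings ignores.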
