SCRATCHbot robot mimics rats and navigates with whiskers

After their work on Whiskerbot, a group of researchers from the University of Sheffield and the Bristol Robotics Lab has created the SCRATCHBot (Spatial Cognition and Representation through Active TouCh), which sweeps its plastic whiskers back and forth to find its way around, much like a real rat. Many rodents can actively move their facial whiskers (vibrissae) as they explore their environment. This active movement, combined with the fine tactile sensitivity of each whisker, gives rodents a sensory advantage when living and hunting in environments where visually dominant predators perform poorly.

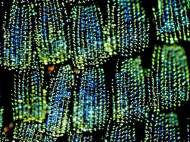

After experimenting with glass fibre, the team adopted ABS plastic because its stiffness is much closer to that of real rat whiskers. The larger, moving whiskers (known as the macro-vibrissae) are read by Hall effect sensors, which measure the movement of small magnets bonded to the base of each whisker. The smaller whiskers at the front (micro-vibrissae) are mimicked by glass-fibre rods bonded to the casing of small microphones embedded in a rubber substrate. The SCRATCHBot’s 18 whiskers move back and forth five times per second and provide all the data the robot uses to move, since it has no vision, proximity or auditory sensors.
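
To make the whisking rhythm concrete, here is a minimal Python sketch of a 5 Hz sweep and a simple contact check. Only the sweep rate and the whisker count come from the article. The sinusoidal profile, the sweep amplitude, the normalized deflection readings and the threshold are illustrative assumptions, not the robot's actual control law.

```python
import math

WHISK_HZ = 5.0            # from the article: five sweeps per second
NUM_WHISKERS = 18         # from the article
AMPLITUDE_DEG = 30.0      # assumed half-range of the sweep (hypothetical)
CONTACT_THRESHOLD = 0.2   # assumed normalized deflection threshold (hypothetical)


def whisk_angle(t: float) -> float:
    """Commanded whisker angle in degrees at time t (seconds).

    Assumes a plain sinusoidal sweep; the real robot may use another profile.
    """
    return AMPLITUDE_DEG * math.sin(2.0 * math.pi * WHISK_HZ * t)


def contacts(deflections: list[float]) -> list[int]:
    """Return the indices of whiskers whose deflection magnitude exceeds the threshold.

    `deflections` stands in for one normalized reading per whisker,
    such as a scaled Hall effect sensor value.
    """
    return [i for i, d in enumerate(deflections) if abs(d) > CONTACT_THRESHOLD]


if __name__ == "__main__":
    readings = [0.0] * NUM_WHISKERS
    readings[3] = 0.5     # pretend whisker 3 is bent by an obstacle
    readings[7] = -0.3    # pretend whisker 7 is bent the other way
    print(whisk_angle(0.05), contacts(readings))
```

At 5 Hz one full sweep takes 0.2 seconds, so the commanded angle in this sketch peaks a quarter of the way through the cycle, at 0.05 seconds.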

All the yellow components of the robot in the photos above were designed and built in the laboratory using one of the team's rapid prototyping machines (3D printers). The whisker columns, located on either side of the “head”, are actuated by brushed DC motors and controlled locally by embedded micro-controllers. The “neck” was built by Elumotion Ltd. and consists of brushless DC motors which give the head 3 degrees of freedom (pitch, yaw and elevation).

The 3 motor drive units on the “body” were designed and built in the laboratory and provide independent drive and steering through 180 degrees, again using brushless DC motors. As with the whisker columns, all motors are controlled locally by separate embedded micro-controllers, which receive their desired velocity or position profiles via a CAN (Controller Area Network) bus, the same protocol used in most modern cars.
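
As a rough illustration of how a velocity set-point could travel over a CAN bus, the sketch below packs one into a classic CAN data frame payload, which carries at most 8 data bytes. The identifier scheme, the command code, the units and the byte layout are all hypothetical; the article does not describe the robot's actual message format.

```python
import struct

MAX_CAN_PAYLOAD = 8      # classic CAN data frames carry at most 8 data bytes
BASE_ID = 0x100          # hypothetical base identifier for motor commands
CMD_VELOCITY = 0x01      # hypothetical command code meaning "velocity set-point"


def pack_velocity_command(motor_id: int, velocity: int) -> tuple[int, bytes]:
    """Build (arbitration_id, payload) for an assumed velocity message.

    Layout (assumed): 1 byte command code, then a 4-byte little-endian
    signed integer velocity in arbitrary units.
    """
    if not 0 <= motor_id < 16:
        raise ValueError("motor_id must be in 0..15 for this sketch")
    arbitration_id = BASE_ID + motor_id          # fits in an 11-bit identifier
    payload = struct.pack("<Bi", CMD_VELOCITY, velocity)
    assert len(payload) <= MAX_CAN_PAYLOAD
    return arbitration_id, payload


def unpack_velocity_command(payload: bytes) -> int:
    """Decode the assumed payload layout and return the velocity."""
    command, velocity = struct.unpack("<Bi", payload)
    if command != CMD_VELOCITY:
        raise ValueError("not a velocity command")
    return velocity


if __name__ == "__main__":
    frame_id, data = pack_velocity_command(2, -1500)
    print(hex(frame_id), data.hex(), unpack_velocity_command(data))
```

Keeping each message small is what lets a local micro-controller decode its own set-point quickly and leave the rest of the bus traffic alone.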

The “brain” of the robot is a combination of FPGA (Field Programmable Gate Array) based and PC based neural models, which issue the motor commands and process the sensory input from the whisker array. The robot therefore does not send data back to a computer during a run, and all processing is done on the platform in real time. At the end of each experimental run, all the sensory data and robot odometry can be uploaded remotely for further analysis. Because the robot is very close to the limit of its performance, the team is designing a small asynchronous wireless board that can broadcast the whisker sensory data for external processing.

The technology demonstrated on the robot, namely the active touch sense and the ability to explore an environment in a non-visual way, could be exploited further. Rescue operations are one possible application domain, although the robot was not specifically designed for search and rescue. Other applications that may benefit from this technology include the inspection of large fluid-filled tanks found in the nuclear industry, the inspection of pipes or conduits filled with dirty fluids, and textile quality inspection. Since there were no plans to exploit this robot commercially, the robot evolved into its next generation, named BIOTACT (BIOmimetic Technology for Vibrissal ACtive Touch).

The robots are smarter than I thought.