iFeel_IM! adds physical hugs to your virtual conversation

Whether you use instant messaging to keep in touch with the people you love, to work with colleagues, or to keep pesky people at a distance, you have surely come across emoticons, or smileys. A husband-and-wife team of scientists based in Japan felt that emoticons weren't enough for people to express themselves, so they came up with iFeel_IM!, a wearable robot that lets you really reach out and touch someone through the Internet.

The proof-of-concept robot, dubbed iFeel_IM! ("I feel therefore I am"), was presented at the first Augmented Human International Conference, held in the French Alps ski resort of Megève. At the two-day gathering, engineers and scientists, many of them from Japan, compared notes on cutting-edge research in augmented reality, the real-time enhancement of experience through virtual, interactive technology.

Dzmitry Tsetserukou, an assistant professor at Toyohashi University of Technology in Japan, said his aim was to heighten emotional connection by adding a human-like sense of touch to the incorporeal ether of cyberspace. Five years in the making, the device is meant to inject a little physicality into online chatter.

"We are steeped in computer-mediated communication: SMS, e-mail, Twitter, instant messaging, 3-D virtual worlds. But many people don't connect emotionally," he said in an interview. "I am looking to create a deep immersive experience, not just a vibration in your shirt triggered by an SMS. Emotion is what gives communication life."

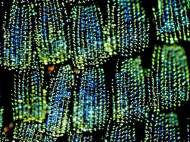

For now, his prototype robot is a collection of sensors, small motors, vibrators and speakers woven into a set of straps similar to a parachute harness, minus the parachute. Connected to a computer, the device can simulate several types of heartbeat, a realistic hug, the fluttering of butterflies in the stomach, and a tingling feeling along the spine. It can also generate warmth.

Software written by his colleague (and wife) Alena Neviarouskaya, a researcher at the University of Tokyo, recognizes emotional cues in common phrases in written text and triggers the appropriate touch sensation in the robot in real time. It distinguishes joy, fear, anger and sadness with 90 percent accuracy, and recognizes a wider set of emotions (adding shame, guilt, disgust, interest and surprise) correctly nearly four times out of five.
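To make the text-to-touch pipeline concrete, here is a deliberately simple sketch in Python. It is not the iFeel_IM! software, whose affect analysis is far more sophisticated; it only assumes a hypothetical keyword lexicon (`EMOTION_LEXICON`) and a hypothetical mapping from emotions to the haptic effects described above (`HAPTIC_RESPONSES`). Both names, the cue words, and the effect labels are invented for illustration.

```python
import re
from collections import Counter
from typing import List, Optional

# Hypothetical toy lexicon: a few cue words per basic emotion.
# The real system uses a much richer analysis; this only illustrates the idea.
EMOTION_LEXICON = {
    "joy": {"happy", "glad", "love", "great", "yay"},
    "fear": {"scared", "afraid", "terrified", "nervous"},
    "anger": {"angry", "furious", "mad", "annoyed"},
    "sadness": {"sad", "lonely", "miss", "cry"},
}

# Hypothetical mapping from a detected emotion to haptic effects
# like those the article mentions (hug, warmth, heartbeat, spine tingle).
HAPTIC_RESPONSES = {
    "joy": ["hug", "warmth"],
    "fear": ["fast_heartbeat", "spine_tingle"],
    "anger": ["fast_heartbeat"],
    "sadness": ["slow_heartbeat", "hug"],
}


def detect_emotion(message: str) -> Optional[str]:
    """Return the emotion whose cue words occur most often, or None if none occur."""
    words = re.findall(r"[a-z']+", message.lower())
    counts = Counter()
    for emotion, cues in EMOTION_LEXICON.items():
        counts[emotion] = sum(1 for w in words if w in cues)
    emotion, hits = counts.most_common(1)[0]
    return emotion if hits > 0 else None


def trigger_haptics(message: str) -> List[str]:
    """Map a chat message to the list of haptic effects to play (empty if neutral)."""
    emotion = detect_emotion(message)
    return HAPTIC_RESPONSES.get(emotion, []) if emotion else []


if __name__ == "__main__":
    print(trigger_haptics("I love you, so happy!"))
```

Simple keyword counting like this misses negation, sarcasm and context ("I'm not happy" would still score as joy), which is exactly why real affect-recognition systems rely on richer linguistic rules.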

Test subjects tried the system in Second Life, the online three-dimensional environment inhabited by avatars controlled by people sitting at their computers. In a demonstration, two people wearing iFeel_IM! robots communicated at a distance through their avatars.

Tsetserukou compared the system to the blockbuster film Avatar, and to the film Surrogates, set in a future where humans stay at home plugged into a cocoon while their healthier, more handsome doppelgangers venture out into the real world. He added that in the not-so-distant future the system could become mobile, integrated into a suit or jacket. Still, it is unclear whether there is a real need for such products. You could even add pheromones and more complex interactions, but is that really necessary in a healthy relationship?