inFORM system – morphing relief as a Tangible User Interface

A group of researchers at MIT developed a prototype of a Tangible User Interface (TUI) with the ability to dynamically create user interfaces by altering its layout. Named inFORM, the system is built on top of a grid-like shape-changing display. It could make use of the way we perceive objects to create an intuitive interface, with logical constraints on the ways we are able to interact with it.

To mediate interaction, the researchers plan to use shape-changing displays in three ways: to facilitate, by providing visual feedback that suggests logical interactions (affordances) through shape change; to restrict, by guiding users with dynamic physical constraints; and to manipulate, by actuating physical objects.

To explore these techniques and interactions, the researchers developed the inFORM system, a relief (2.5D) shape display that enables dynamic affordances, constraints and actuation of passive objects. Bear in mind that current shape displays remain limited by scale and cost, so treat this project as a spark that might inspire further research in this area.

What is the inFORM system?

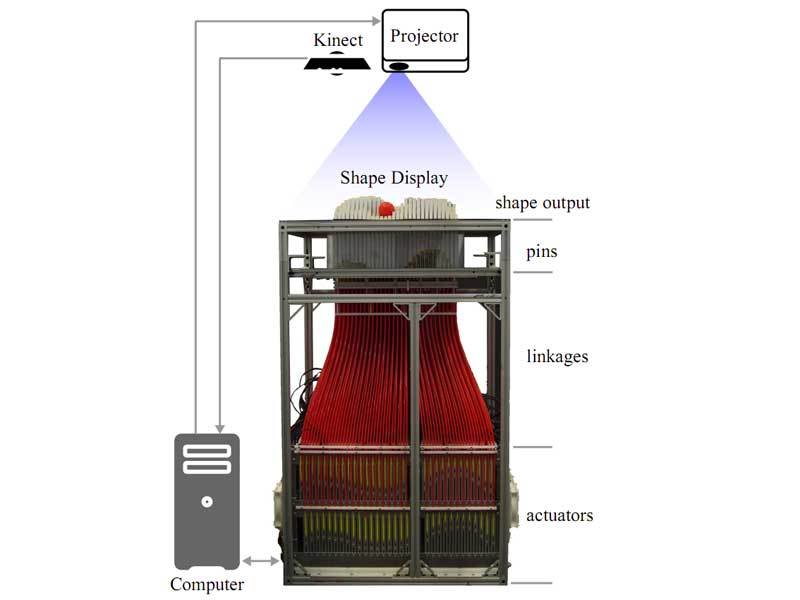

The system supports fast 2.5D actuation, malleable input, and variable stiffness haptic feedback. To achieve this, the system uses a grid of 30×30 motorized white polystyrene pins. Each pin has a square footprint 9.525 mm (0.375 inch) on a side, with 3.175 mm (0.125 inch) inter-pin spacing, and can extend up to 10 cm (roughly 4 inches) from the surface.
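The pin dimensions imply a pitch and an overall size for the grid. The short Python sketch below works them out, assuming the 9.525 figure is the side length of a square pin in millimeters and that every pin is accompanied by one gap. The constant and function names are illustrative and do not come from the inFORM software.

```python
# Pin-grid geometry derived from the figures quoted in the article.
# Assumption: 9.525 is the side length (mm) of a square pin.
PINS_PER_SIDE = 30
PIN_SIDE_MM = 9.525
PIN_GAP_MM = 3.175


def pin_pitch_mm():
    """Center-to-center distance between neighboring pins."""
    return PIN_SIDE_MM + PIN_GAP_MM


def grid_extent_mm():
    """Approximate side length of the display, counting one gap per pin."""
    return PINS_PER_SIDE * pin_pitch_mm()


def pin_center_mm(row, col):
    """(x, y) center of a pin, measured from the corner of the grid."""
    pitch = pin_pitch_mm()
    return (col + 0.5) * pitch, (row + 0.5) * pitch


if __name__ == "__main__":
    print(f"pitch: {pin_pitch_mm():.3f} mm")
    print(f"extent: {grid_extent_mm():.1f} mm per side")
    print(f"pins: {PINS_PER_SIDE ** 2}")
```

Under these assumptions the pitch comes to 12.7 mm (half an inch) and the actuated area to roughly 381 mm per side.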

Overall, the system is 1.1 m (3.6 feet) tall, because each pin is connected by a rod to an actuator that can push or pull it. Besides servoing the position of the pins, microcontrollers read their linear positions, which allows user input. The controllers have the capacity to enable haptic feedback and variable stiffness in future variations of the inFORM system. Each pin can lift up to 100 grams (3.52 ounces) of weight.
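One common way to servo a pin from its measured position is a proportional control loop. The sketch below only illustrates that idea and is not the inFORM firmware: the gain, the time step and the function names are all assumptions, and the gain stands in loosely for how stiff a pin would feel to the user.

```python
MAX_TRAVEL_MM = 100.0  # pin travel quoted in the article (10 cm)


def servo_step(position_mm, target_mm, gain_per_s, dt_s):
    """Advance one control step at a speed proportional to the position error."""
    error = target_mm - position_mm
    new_position = position_mm + gain_per_s * error * dt_s
    # Clamp to the physical travel of the pin.
    return min(MAX_TRAVEL_MM, max(0.0, new_position))


def settle(position_mm, target_mm, gain_per_s, dt_s=0.01, steps=500):
    """Run the loop for a fixed number of steps and return the final position."""
    for _ in range(steps):
        position_mm = servo_step(position_mm, target_mm, gain_per_s, dt_s)
    return position_mm


if __name__ == "__main__":
    # A higher (hypothetical) gain reaches the target sooner and resists
    # displacement more strongly; a lower gain feels softer.
    print(settle(0.0, 80.0, gain_per_s=5.0))
    print(settle(0.0, 80.0, gain_per_s=0.5))
```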

The system, created by Professor Hiroshi Ishii and his students at the MIT Tangible Media Group, relies on an overhead Microsoft Kinect depth camera to track users’ hands and objects on the surface. A static background image of the table surface is combined with the surface’s real-time height map to form a dynamic background image that is used for subtraction and segmentation.
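The idea of a dynamic background can be sketched in a few lines of Python. This is a simplified illustration, assuming the depth image is already registered to the pin grid at one depth value per pin and that all values are in millimeters; the function names and the 10 mm threshold are hypothetical choices, not values taken from the inFORM software.

```python
def dynamic_background(static_depth, pin_heights):
    """Expected depth per cell: depth of the flat table minus the current pin height."""
    return [[d - h for d, h in zip(depth_row, height_row)]
            for depth_row, height_row in zip(static_depth, pin_heights)]


def foreground_mask(depth_frame, background, threshold_mm=10.0):
    """True where something sits closer to the camera than the expected surface."""
    return [[(b - d) > threshold_mm for d, b in zip(depth_row, back_row)]
            for depth_row, back_row in zip(depth_frame, background)]


if __name__ == "__main__":
    static = [[1000.0] * 3 for _ in range(3)]   # camera 1 m above the flat table
    heights = [[0.0] * 3 for _ in range(3)]
    heights[1][1] = 50.0                        # one pin raised by 50 mm
    frame = [[1000.0] * 3 for _ in range(3)]
    frame[1][1] = 950.0                         # the raised pin as seen by the camera
    frame[0][2] = 900.0                         # a hand hovering over one corner
    mask = foreground_mask(frame, dynamic_background(static, heights))
    for row in mask:
        print(row)
```

Because the raised pin is already accounted for in the background, only the hand is flagged as foreground.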

The 3D fingertip coordinates are transformed to surface space, and an arbitrary number of fingertip contacts can be tracked and detected to trigger touch events. For object tracking, the researchers currently rely on color tracking in the HSV color space, although other approaches to object tracking would be straightforward to implement. Objects and finger positions are tracked at 2 mm resolution in the 2D surface plane, and at 10 mm in height.
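Color tracking in HSV space can be illustrated with Python's standard `colorsys` module. The sketch below is a minimal, assumption-laden example rather than the researchers' implementation: the image is a plain nested list of RGB tuples, and the tolerance and threshold parameters are made-up defaults.

```python
import colorsys


def hue_distance(h1, h2):
    """Distance between two hues on the color wheel (hue wraps around at 1.0)."""
    d = abs(h1 - h2) % 1.0
    return min(d, 1.0 - d)


def track_color(image, target_hue, hue_tol=0.05, min_sat=0.5, min_val=0.3):
    """Return the (row, col) centroid of pixels matching the target hue, or None."""
    row_sum = col_sum = count = 0
    for r, line in enumerate(image):
        for c, (red, green, blue) in enumerate(line):
            h, s, v = colorsys.rgb_to_hsv(red / 255, green / 255, blue / 255)
            # Require a saturated, reasonably bright pixel so grey
            # background pixels are not mistaken for the token.
            if s >= min_sat and v >= min_val and hue_distance(h, target_hue) <= hue_tol:
                row_sum += r
                col_sum += c
                count += 1
    if count == 0:
        return None
    return row_sum / count, col_sum / count


if __name__ == "__main__":
    image = [[(128, 128, 128)] * 3 for _ in range(3)]  # grey table
    image[1][1] = (255, 0, 0)                          # red token, two pixels wide
    image[1][2] = (255, 0, 0)
    print(track_color(image, target_hue=0.0))
```

Matching on hue rather than raw RGB makes the match less sensitive to brightness changes, which is a common reason for working in HSV.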

What can the inFORM system do?

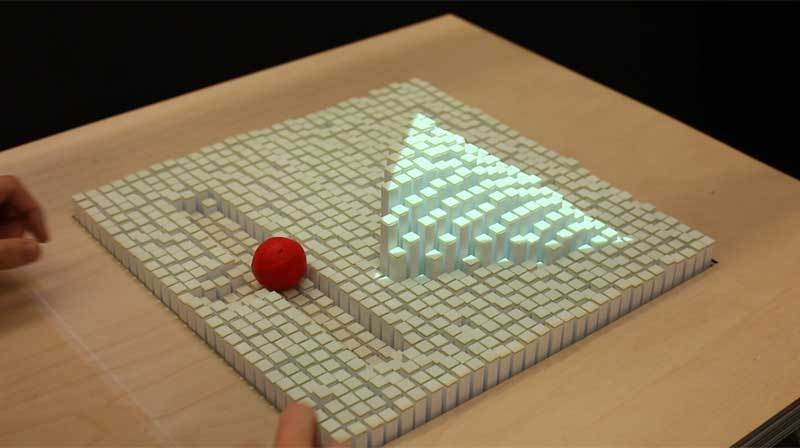

Aside from representing shapes and relief, the system could be used for information interaction based on tokens, which are objects or projections on the system’s surface. Pins can be used to manipulate objects by moving them along the X and Y axes or lifting them along the Z axis. Objects can also be rolled, flipped or tilted. This feature could be used to orient an object’s surface towards the user. For instance, when a phone is ringing or you are watching multimedia, the surface can tilt to provide a better viewing angle.

Faster actuation could be used to create in-air movement by essentially throwing items at desired angles. If the object is intended to land on the inFORM surface, sufficient tracking could enable the control to dampen the impact and avoid subsequent bounces. The researchers managed to launch a 7 g ball with a 2 cm diameter to approximately 8 cm above the maximum pin height of the surface.
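The launch figures allow a rough estimate of how fast the ball must leave the pins. The sketch below uses basic projectile physics and assumes the ball is released straight up at the maximum pin height with no air drag; the function names are illustrative.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def launch_speed(apex_height_m):
    """Vertical speed needed at release to rise apex_height_m, ignoring drag."""
    return math.sqrt(2 * G * apex_height_m)


def potential_energy(mass_kg, height_m):
    """Energy the pins must impart to lift the mass by height_m."""
    return mass_kg * G * height_m


def airtime(apex_height_m):
    """Time to rise to the apex and fall back to the release height."""
    return 2 * launch_speed(apex_height_m) / G


if __name__ == "__main__":
    height = 0.08   # 8 cm above maximum pin height
    mass = 0.007    # 7 g ball
    print(f"release speed: {launch_speed(height):.2f} m/s")
    print(f"energy: {potential_energy(mass, height) * 1000:.2f} mJ")
    print(f"airtime: {airtime(height):.2f} s")
```

Under these assumptions the ball needs a release speed of roughly 1.25 m/s and stays in the air for about a quarter of a second, which gives a sense of how quickly tracking would have to react to dampen the landing.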

Vibrations produced by the actuators can be used to provide haptic feedback to the user or to shake an object in order to draw attention to it. Other natural interaction features of the system are referred to by the researchers as slots and wells. Slots are grooves that constrain the direction in which a user can move a token. Wells can change in size and shape to fit the token, and are used to define a state or to move tokens outside the user’s reach.
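Slots and wells can be pictured as patterns in the height map that drives the pins. The following Python sketch builds such patterns on a 30×30 grid; it is an illustration only, and the function names, depths and the circular well shape are assumptions rather than details of the inFORM software.

```python
GRID = 30  # pins per side, as quoted in the article


def flat_surface(height_mm):
    """A height map with every pin raised to the same height."""
    return [[height_mm] * GRID for _ in range(GRID)]


def carve_slot(surface, row, col_start, col_end, depth_mm):
    """Lower a one-pin-wide groove so a token in it can only slide along the row."""
    for c in range(col_start, col_end + 1):
        surface[row][c] = max(0.0, surface[row][c] - depth_mm)
    return surface


def carve_well(surface, center_row, center_col, radius_pins, depth_mm):
    """Lower a roughly circular pit whose radius can be matched to a token."""
    for r in range(GRID):
        for c in range(GRID):
            if (r - center_row) ** 2 + (c - center_col) ** 2 <= radius_pins ** 2:
                surface[r][c] = max(0.0, surface[r][c] - depth_mm)
    return surface


if __name__ == "__main__":
    surface = flat_surface(50.0)
    carve_slot(surface, row=10, col_start=5, col_end=20, depth_mm=20.0)
    carve_well(surface, center_row=20, center_col=20, radius_pins=2, depth_mm=30.0)
    print(surface[10][5], surface[20][20], surface[0][0])
```

Resizing a well to fit a different token then amounts to regenerating the height map with a new radius.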

However, trials of the system in a 3D Model Manipulation application revealed that users sometimes struggled with physically overlapping content and UI elements. You can notice in the video below that the ball located on the inFORM surface doesn’t act quite the same as the ball in the basket. The MIT researchers believe that these problems are caused by the limited resolution of the shape display hardware and by software that does not adapt well to content changes.

Room for improvement

In theory, the system’s 900 pins could consume up to 2700 W. In practice, however, the system uses 700 W when in motion. Due to friction, the pins maintain their static position without power, so the power consumption needed to maintain a shape is less than 300 W. Aside from power consumption, which would grow with increased resolution (the number of pins and their density), the system also suffers from heat dissipation problems: it requires two rows of five 120 mm fans to cool the actuators.
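The power figures can be broken down per pin, which also hints at how consumption might grow with resolution. The sketch below is back-of-the-envelope arithmetic: the linear scaling with pin count is a simplifying assumption, and the 60×60 grid is a hypothetical example rather than a planned design.

```python
PIN_COUNT = 900
PEAK_W = 2700.0     # theoretical maximum
MOVING_W = 700.0    # observed while in motion
HOLDING_W = 300.0   # upper bound while holding a shape


def per_pin(total_w, pins=PIN_COUNT):
    """Average power per pin for a given total."""
    return total_w / pins


def scaled_power(total_w, pins_per_side, base_pins_per_side=30):
    """Naive estimate, assuming power grows linearly with the number of pins."""
    return total_w * (pins_per_side / base_pins_per_side) ** 2


if __name__ == "__main__":
    print(f"peak per pin: {per_pin(PEAK_W):.2f} W")
    print(f"moving per pin: {per_pin(MOVING_W):.2f} W")
    print(f"holding per pin: under {per_pin(HOLDING_W):.2f} W")
    print(f"60x60 grid in motion: about {scaled_power(MOVING_W, 60):.0f} W")
```

The theoretical peak works out to 3 W per pin, and doubling the resolution along each side would quadruple the pin count and, under this assumption, the power draw.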

The inFORM system could also be improved by introducing more sensors: multiple cameras, markers, or touch sensors could be used to replace or enhance the camera-based touch tracking. Tools with embedded RF or other types of markers could be used to enhance the interaction capabilities of the system.

For more details, you can read the paper presented at the ACM Symposium on User Interface Software and Technology: “inFORM: Dynamic Physical Affordances and Constraints through Shape and Object Actuation” [456KB PDF].
