Skinput uses sound to turn your body into an input device

In our previous article we wrote about a new material which gives the sense of touch, and here is an invention with a different twist. As devices with ever greater computational power and more capabilities keep shrinking, they can easily be carried on our bodies. However, their small size typically limits the interaction space and consequently reduces their usability and functionality.

There have been many suggestions on how to solve this problem, such as augmented reality projected onto our glasses or retina, small projectors which can turn tables and walls into interactive surfaces, or the combination of a projector and a camera found in one of our favorites, SixthSense. However, researchers Chris Harrison from Carnegie Mellon University and Desney Tan and Dan Morris from Microsoft Research claim there is one surface that has previously been overlooked as an input canvas: our skin.

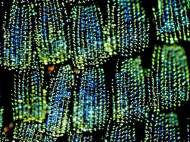

Appropriating the human body as an input device is appealing not only because we have roughly two square meters of external surface area, but also because much of it is easily accessible by our hands (e.g., arms, upper legs, torso). Furthermore, proprioception (our sense of how our body is configured in three-dimensional space) allows us to accurately interact with our bodies in an eyes-free manner. Few external input devices can claim this accurate, eyes-free input characteristic and provide such a large interaction area.

The user wears an armband containing a very small projector, which projects a menu or keypad onto the hand or forearm. The armband also contains an acoustic sensor. The sensor is there because tapping different parts of the body produces distinct sounds, depending on the area’s bone density, soft tissue, joints and other factors.

The software in Skinput is able to analyze the sound frequencies picked up by the acoustic sensor and then determine which button the user has just tapped. Wireless Bluetooth technology then transmits the information to the device. So if you tapped out a phone number, the wireless technology would send that data to your phone to make the call. Harrison claims they have achieved accuracies ranging from 81.5 to 96.8 percent and enough buttons to control many devices.
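To make the idea of telling buttons apart by their sound more concrete, here is a minimal sketch in Python. It is not the researchers' actual software: the article does not describe their classifier, so this example swaps in a simple nearest-centroid comparison over a few frequency bands. The function names, the probe frequencies, the button labels and the synthetic "taps" (decaying sine waves standing in for real sensor recordings) are all assumptions made for illustration.

```python
import math


def band_energies(samples, sample_rate, bands):
    """Return the share of spectral energy at each probe frequency (naive DFT)."""
    n = len(samples)
    energies = []
    for freq in bands:
        step = 2 * math.pi * freq / sample_rate
        re = sum(s * math.cos(step * i) for i, s in enumerate(samples))
        im = sum(s * math.sin(step * i) for i, s in enumerate(samples))
        energies.append((re * re + im * im) / n)
    total = sum(energies) or 1.0  # avoid dividing by zero on silence
    return [e / total for e in energies]


def train_centroids(labeled_features):
    """Average the feature vectors recorded for each button label."""
    sums, counts = {}, {}
    for label, feats in labeled_features:
        acc = sums.setdefault(label, [0.0] * len(feats))
        for k, value in enumerate(feats):
            acc[k] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def classify_tap(features, centroids):
    """Pick the button whose average 'sound signature' is closest."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))


def synthetic_tap(freq, sample_rate=2000, n=200):
    """Hypothetical stand-in for a sensor recording: a decaying sine wave."""
    return [
        math.exp(-i / 50) * math.sin(2 * math.pi * freq * i / sample_rate)
        for i in range(n)
    ]


# Assumed setup: two on-skin buttons whose taps ring at different frequencies.
SAMPLE_RATE = 2000
BANDS = [50, 100, 200]  # probe frequencies in Hz (illustrative values)

training = [
    ("button_1", band_energies(synthetic_tap(50), SAMPLE_RATE, BANDS)),
    ("button_2", band_energies(synthetic_tap(200), SAMPLE_RATE, BANDS)),
]
centroids = train_centroids(training)

# A new tap that rings slightly off the trained frequency.
new_tap = band_energies(synthetic_tap(55), SAMPLE_RATE, BANDS)
predicted = classify_tap(new_tap, centroids)
```

In a real system the training taps would come from the armband's sensor during a short calibration session, and the predicted label would then be sent on to the paired device.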

We think it’s not a question of whether to use Skinput or SixthSense, because both technologies should be combined, along with some features from their competition, in order to make a practical interface. While SixthSense could perform better in loud environments and offers more features, Skinput doesn’t require any markers to be worn and is more suitable for people with sight impairments, since it is much easier to operate even with your eyes closed.
