Robots that tidy up your room are learning to handle new objects

Researchers in Cornell’s Personal Robotics Lab have developed a new algorithm that lets a robot use its artificial intelligence to look at a group of objects instead of recognizing single objects placed in front of its sensors. The algorithm enables the robot to survey its surroundings, identify all the objects, figure out where they belong and estimate a suitable place to move each of them.

The researchers tested placing dishes, books, clothing and toys on tables and in bookshelves, dish racks, refrigerators and closets. The robot was up to 98 percent successful in identifying and placing objects it had seen before. It was able to place objects it had never seen before, but success rates fell to an average of 80 percent. Ambiguously shaped objects, such as clothing and shoes, were most often misidentified.

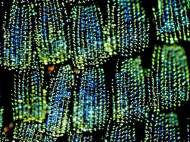

The robot uses a Microsoft Kinect 3D camera to survey the room and creates an overall image of its environment by overlapping, or “stitching”, individual images together. The robot’s computer divides the resulting image into blocks based on potential displacements detected as discontinuities of color and shape. For each block it computes the probability of a match with each object in its database and chooses the most likely match.
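The last step, picking the most likely match for each block, can be illustrated with a minimal Python sketch. The block names, object labels and probability values below are invented for illustration, and the helper names `most_likely_object` and `label_blocks` are hypothetical; the article does not describe how the probabilities themselves are computed.

```python
from typing import Dict


def most_likely_object(match_probs: Dict[str, float]) -> str:
    """Return the database label with the highest match probability."""
    if not match_probs:
        raise ValueError("no candidate objects for this block")
    return max(match_probs, key=match_probs.get)


def label_blocks(blocks: Dict[str, Dict[str, float]]) -> Dict[str, str]:
    """Assign each image block its most likely object label."""
    return {block_id: most_likely_object(probs) for block_id, probs in blocks.items()}


# Hypothetical match probabilities for two segmented blocks.
blocks = {
    "block_0": {"bowl": 0.62, "globe": 0.30, "mug": 0.08},
    "block_1": {"book": 0.81, "box": 0.19},
}
labels = label_blocks(blocks)
print(labels)
```

A block whose top two probabilities are close, such as a bowl versus a globe, is exactly the kind of case where a simple "take the maximum" rule can go wrong.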

For each recognized object the robot examines the target area to decide on an appropriate and stable placement in a process similar to displacement detection. It divides the recorded 3D image of the target space into small chunks and computes how items fit into that free space by considering the shape of the object it’s placing.
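As a rough illustration of checking how an item fits into free space, the Python sketch below simplifies the problem to a flat 2D occupancy grid and a rectangular footprint. This is an assumption made for brevity: the robot described here works on 3D data and considers the object's actual shape, and the function name `free_placements` and the grid values are hypothetical.

```python
from typing import List, Tuple


def free_placements(
    occupancy: List[List[int]], footprint_rows: int, footprint_cols: int
) -> List[Tuple[int, int]]:
    """Return top-left cells where a rectangular footprint fits entirely in free space.

    occupancy[r][c] is 1 if the chunk is already occupied and 0 if it is free.
    """
    rows, cols = len(occupancy), len(occupancy[0])
    spots = []
    for r in range(rows - footprint_rows + 1):
        for c in range(cols - footprint_cols + 1):
            # The footprint fits only if every chunk it would cover is free.
            fits = all(
                occupancy[r + dr][c + dc] == 0
                for dr in range(footprint_rows)
                for dc in range(footprint_cols)
            )
            if fits:
                spots.append((r, c))
    return spots


# Hypothetical shelf seen from above, divided into small chunks.
shelf = [
    [1, 0, 0, 0],
    [1, 0, 0, 1],
    [0, 0, 1, 1],
]
spots = free_placements(shelf, 2, 2)
print(spots)
```

Here only one position leaves room for a 2-by-2 footprint; a fuller version would also need to check stability, which this sketch ignores.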

The robot learns this behavior from graphic simulations in which placement sites are labeled as good or bad. It builds a model of what good placement sites have in common and chooses the spot that fits that model most closely. Finally, the robot creates a graphic simulation of how to move the object to its final location and carries out those movements.
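The idea of modeling what good sites have in common and picking the closest fit can be sketched in Python with a nearest-centroid stand-in. This is not the learning method from the paper, which the article does not detail; the two features per site (support area and tilt), their values and the site names are all hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def centroid(vectors: List[Sequence[float]]) -> Tuple[float, ...]:
    """Average the feature vectors component by component."""
    n = len(vectors)
    return tuple(sum(v[i] for v in vectors) / n for i in range(len(vectors[0])))


def closest_to_model(model: Sequence[float], candidates: Dict[str, Sequence[float]]) -> str:
    """Return the candidate site whose features lie nearest to the model."""
    return min(candidates, key=lambda name: math.dist(model, candidates[name]))


# Hypothetical labeled simulations: (support_area, tilt) features with a label.
labeled = [
    ((0.9, 0.1), "good"),
    ((0.7, 0.3), "good"),
    ((0.2, 0.8), "bad"),
]

# The "model" here is simply the average of the good examples.
good_model = centroid([features for features, label in labeled if label == "good"])

candidates = {"shelf_left": (0.3, 0.7), "shelf_right": (0.85, 0.15)}
best = closest_to_model(good_model, candidates)
print(best)
```

Note that this stand-in only uses the good examples; a real classifier would also learn from the sites labeled as bad.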

According to Ashutosh Saxena, assistant professor of computer science at Cornell who leads the group that developed the algorithm, the robot relies on the captured image, so a bowl could be mistaken for a globe. Performance could be improved with cameras that provide higher-resolution images, with tactile feedback, and by pre-programming the robot with 3D models of the objects it is going to handle, rather than leaving it to create its own model from what it sees.

As one of their next steps, Saxena and his Personal Robotics team plan to add contextual decisions when objects are placed. This could lead to more advanced tidying of the room because it would allow the robot to place objects that are related in some way near each other. For instance, a computer mouse can be placed anywhere on a table, but its place is usually beside the keyboard.

For more information, you can read the paper published in the International Journal of Robotics Research: “Learning to Place New Objects in a Scene” [6.51MB PDF].

I know it’s their own robot, but it is so painfully slow. They should work a lot on improving its hardware and then optimize their algorithms to work in real-world situations instead of making it work in this very slow mode.

My respect for the effort, but even if they make it 100% accurate, the algorithm could be unusable on faster platforms.

The researchers improved this algorithm by enabling robots to take into account where and how humans might stand, sit or work in a room, and place objects in their usual relationship to those imaginary people.

Each object is described in terms of its relationship to a small set of human poses, rather than to the long list of other objects in a scene. A computer learns these relationships by observing 3D images of rooms with objects in them, in which it imagines human figures, placing them in practical relationships with objects and furniture. The computer calculates the distance of objects from various parts of the imagined human figures, and notes the orientation of the objects.
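The distance calculation can be sketched in a few lines of Python. The joint names, the coordinates (in centimeters) and the helper `pose_features` are hypothetical, and the sketch leaves out the object's orientation, which the researchers' method also records.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float, float]


def pose_features(object_position: Point, pose_joints: Dict[str, Point]) -> Dict[str, float]:
    """Distance from an object to each named part of an imagined human figure."""
    return {
        joint: math.dist(object_position, joint_position)
        for joint, joint_position in pose_joints.items()
    }


# Hypothetical seated figure at a desk; coordinates in centimeters.
seated_pose = {
    "head": (0.0, 0.0, 120.0),
    "right_hand": (30.0, 40.0, 70.0),
}
mouse_position = (30.0, 80.0, 70.0)

features = pose_features(mouse_position, seated_pose)
print(features)
```

Describing the mouse by its distance to the hand, rather than to every other object on the desk, keeps the description short, which is the point made above about using a small set of human poses.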

“It is more important for a robot to figure out how an object is to be used by humans, rather than what the object is. One key achievement in this work is using unlabeled data to figure out how humans use a space”, said Saxena.

The researchers tested their method using images of living rooms, kitchens and offices from the Google 3D Warehouse, and later, images of local offices and apartments. Finally, they programmed a robot to carry out the predicted placements in local settings.

Volunteers who were not associated with the project rated the placement of each object for correctness on a scale of 1 to 5. Comparing various algorithms, the researchers found that placements based on human context were more accurate than those based solely on relationships between objects, but the best results of all came from combining human context with object-to-object relationships, with an average score of 4.3.

That sounds far more impressive regarding usability, although I still hope they pay attention to the speed of the process.