Live 3D teleconferencing from USC ICT

The potential utility of three-dimensional video teleconferencing has been dramatized in movies such as Forbidden Planet and Star Wars. There have been many attempts to realize this technology, but most have fallen short. A recent demonstration by CNN showed television viewers the full body of a remote correspondent transmitted “holographically” to the news studio, appearing to make eye contact with the news anchor. However, the effect was performed with image compositing in postproduction and could only be seen by viewers at home. The Musion Eyeliner system claims holographic “3D” transmission of figures such as Prince Charles and Richard Branson in life size to theater stages, but the transmission is simply 2D high-definition video projected onto the stage using a Pepper’s Ghost effect that is viewable only from the theater audience; the real on-stage participant must pretend to see the transmitted person from the correct perspective to help convince the audience of the effect.

The 3D teleconferencing system, developed at the USC Institute for Creative Technologies, consists of a 3D scanning system that scans the remote participant (RP), a 3D display that shows the RP, and a 2D video link that lets the RP see their audience.

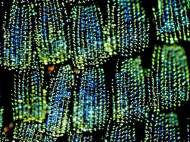

The face of the RP is scanned at 30Hz using a structured light scanning system based on phase unwrapping. The system uses a monochrome Point Grey Research Grasshopper camera capturing frames at 120Hz and a greyscale video projector with a frame rate of 120Hz. One of two half-lit images is subtracted from the other, and the zero-crossings across scan lines are detected in order to robustly identify the absolute 3D position of the pixels at the center of the face. This lets the phase unwrapping begin with robust absolute coordinates for a vertical contour of seed pixels. Conveniently, the maximum of these two half-lit images provides a fully illuminated texture map for the face, while the minimum of the images approximates the ambient light.
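To make the image arithmetic concrete, here is a minimal sketch of how two half-lit images could be combined. It is not the researchers' code: the function names `combine_half_lit` and `zero_crossing`, the tiny one-row test images, and the linear sub-pixel interpolation are all assumptions for illustration, and the triangulation from the detected edge to a 3D position is left out.

```python
def combine_half_lit(img_a, img_b):
    """Combine two same-sized greyscale images (lists of rows).

    Returns (texture, ambient, diff):
      texture = per-pixel maximum (approximates a fully lit image)
      ambient = per-pixel minimum (approximates ambient light)
      diff    = img_a - img_b (changes sign at the lit/unlit boundary)
    """
    texture = [[max(a, b) for a, b in zip(ra, rb)] for ra, rb in zip(img_a, img_b)]
    ambient = [[min(a, b) for a, b in zip(ra, rb)] for ra, rb in zip(img_a, img_b)]
    diff = [[a - b for a, b in zip(ra, rb)] for ra, rb in zip(img_a, img_b)]
    return texture, ambient, diff


def zero_crossing(row):
    """Return the sub-pixel column where a scan line changes sign, or None.

    Linear interpolation between neighbouring samples is an assumption
    of this sketch, not something stated in the article.
    """
    for x in range(len(row) - 1):
        d0, d1 = row[x], row[x + 1]
        if d0 == 0:
            return float(x)
        if d0 * d1 < 0:
            # Solve d0 + t * (d1 - d0) = 0 for t in (0, 1).
            return x + d0 / (d0 - d1)
    if row and row[-1] == 0:
        return float(len(row) - 1)
    return None


# Hypothetical one-row images: left half lit, then right half lit.
left_lit = [[200, 200, 110, 20, 20]]
right_lit = [[20, 20, 90, 200, 200]]

texture, ambient, diff = combine_half_lit(left_lit, right_lit)
edge = zero_crossing(diff[0])
print(texture[0], ambient[0], edge)
```

Running the zero-crossing search on every scan line would give one edge position per row, which matches the idea of a vertical contour of seed pixels down the center of the face.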

The result of the phase unwrapping algorithm is a depth map image for the face, which is transmitted along with the facial texture images at 30Hz to the display computer. The texture image is transmitted at the original 640×480 pixel resolution, while the depth map is filtered and down-sampled to 80×60 resolution. This down-sampling is done to reduce the complexity of the polygonal mesh formed by the depth map, since it must be rendered at thousands of frames per second to the 3D display projector.
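A rough sketch shows why down-sampling a 640×480 depth map to 80×60 matters for the mesh. The article only says the data is "filtered and down-sampled"; the 8×8 box filter used here is an assumption, as are the names `box_downsample` and `grid_triangles` and the synthetic depth ramp. The triangle count assumes a regular grid mesh with two triangles per cell.

```python
def box_downsample(depth, factor):
    """Average each factor x factor block of a depth map (list of rows)."""
    h, w = len(depth), len(depth[0])
    out = []
    for y in range(0, h - h % factor, factor):
        row = []
        for x in range(0, w - w % factor, factor):
            block = [depth[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def grid_triangles(width, height):
    """Triangles in a regular grid mesh with one vertex per depth sample."""
    return 2 * (width - 1) * (height - 1)


# Synthetic 640x480 depth map: a horizontal ramp, purely for illustration.
depth = [[float(x) for x in range(640)] for _ in range(480)]
small = box_downsample(depth, 8)

full_tris = grid_triangles(640, 480)
small_tris = grid_triangles(len(small[0]), len(small))
print(len(small[0]), len(small), full_tris, small_tris)
```

Under these assumptions the mesh shrinks from 612,162 triangles to 9,322, roughly a 65-fold reduction per rendered frame.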

That information is sent to the auto-stereoscopic 3D display (which we described in our previous article). The system also has a 2D video feed with an 84° field of view, which allows the remote participant to view the audience interacting with their three-dimensional image. A beam-splitter is used to virtually place the camera near the position of the eyes of the 3D RP. The beam-splitter is one of the protective transparent Lexan shields around the spinning mirror.

The video from the aligned 3D display camera is transmitted to the computer performing 3D scanning of the RP. The scanning computer also performs face tracking on the video stream so that the 3D display can render the correct vertical parallax of the virtual head for everyone in the audience. In addition, the scanning computer displays the video of the audience on a large LCD screen in front of the RP. The screen is approximately 1.8 meters wide and one meter away from the RP, covering an 84° field of view.
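The 84° figure can be checked with basic trigonometry. This short sketch assumes the RP sits centered in front of a flat screen, so the horizontal field of view is twice the arctangent of half the width over the distance; the function name `horizontal_fov_degrees` is made up for this example.

```python
import math


def horizontal_fov_degrees(screen_width_m, distance_m):
    """Angle subtended by a flat screen for a viewer centered in front of it."""
    return math.degrees(2 * math.atan((screen_width_m / 2) / distance_m))


# Values from the article: a 1.8 m wide screen viewed from 1 m away.
fov = horizontal_fov_degrees(1.8, 1.0)
print(round(fov, 1))
```

The result rounds to 84°, which matches the field of view of the camera feed so the RP sees the audience at roughly the same angles the camera captured them.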

The researchers have outlined potential future improvements to the system, such as improving the quality of the rendered face, adding color, and extending the current one-to-many system into one that accommodates any number of remote participants.

This idea could be revolutionary if applied on a larger scale, with a larger mirror. I watched their videos and really liked what the concept can do. Here is my review: http://www.thehdstandard.com/streaming-technology/3d-video-teleconferencing/

Catalin

Professional Streaming Consultant